Collected Advice for Doing Scientific Research

by Malte Skarupke

I’ve been collecting advice from various sources about how to do research. I can’t find a good place that collects this, so let’s see if I can create one.

The idea is that this should be something similar to George Polya’s “How to Solve It” but for doing research instead of solving problems. There is a lot of overlap between those two ideas, so I will quote a lot from Polya, but I will also add ideas from other sources. I should say though that my sources are mostly from Computer Science, Math and Physics. So this list will be biased towards those fields.

My other background here is that I work in video game AI so I’ve read a lot of AI literature and have found parallels between solving AI problems and solving research problems. So I will try to generalize patterns that AI research has found about how to solve hard problems.

A lot of practical advice will be for getting you unstuck. But there will also be advice for the general approach to doing research.

1. The Framework

The general framework is that of exploration and exploitation. Exploitation means you are getting more out of old ideas. Exploration means you are looking for entirely new ideas. You may be thinking that doing research is more exploration than exploitation, but it’s actually a mix which contains more exploitation than exploration. Really new ideas get discovered rarely, and most of the work is to realize all the consequences of existing ideas.

The two analogies I like for this are hill climbing and exploring the ocean.

1.1 Hill Climbing

Exploitation is an effort in hill climbing. Hill climbing comes from the family of AI problems that deal with search, where search means “I’m at point A, I want to get to point B.” For research point A is “here is what I know now” and point B is “here is what I would like to find out/prove/demonstrate/get to work/make happen etc.”

There is a large number of search algorithms, and you only really use hill climbing if your problem meets the following criteria: You can’t see very far, the problem is very complex, you don’t know where the goal is and progress is slow. So you can’t just say “I’m going to explore a thousand paths” because exploring one path might take you a week before you find out that it leads to a dead end.

At that point all fancy AI techniques are out of the door and we’re left with simple hill climbing. Luckily AI has several improvements over the simple “go up” approach that will just get you stuck on the first small hill.
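To make the “stuck on the first small hill” problem concrete, here is a minimal sketch of the simple “go up” approach. The 1-D `landscape` list and the function name `hill_climb` are made up for illustration; real research landscapes are obviously not a list of numbers.

```python
def hill_climb(heights, start):
    """Greedy hill climbing on a 1-D landscape (a hypothetical toy example).

    From the current position, step to the higher of the two neighbors.
    Stop as soon as no neighbor is strictly higher than where we stand.
    """
    pos = start
    while True:
        neighbors = [i for i in (pos - 1, pos + 1) if 0 <= i < len(heights)]
        best = max(neighbors, key=lambda i: heights[i])
        if heights[best] <= heights[pos]:
            return pos  # stuck: every direction leads down or sideways
        pos = best


# Made-up landscape: a small hill at index 2 and a tall one at index 7.
landscape = [0, 1, 2, 1, 0, 3, 5, 8, 4]

local_peak = hill_climb(landscape, start=0)   # stops on the small hill
global_peak = hill_climb(landscape, start=4)  # happens to start in the right valley
```

Starting from the left edge, the climber stops on the small hill and never sees the tall one. Only a different starting point gets it to the top, which is the weakness the improvements are meant to address.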

1.2 Exploring the Ocean

For the “exploration” part of exploration and exploitation I want to use the analogy of exploring the ocean. You obviously can’t do hill climbing there. You could try to bring a long rope and measure the depth of the ocean, but then you would always just move straight back to the island that you came from. Because if you just left the harbor, in which direction does the floor of the ocean go up? Back into the harbor. You have to cover a large distance before you can do hill climbing.

The “exploring the ocean” analogy is not perfect, because there is a property to this kind of research where the more you’re trying to reach a goal, the less likely you’re going to get there. I guess it works if you have a wrong idea of where the goal is. Like Columbus thinking that India was much closer, and accidentally discovering America.

The best explanation I have found for this is by Kenneth Stanley in his talk The Myth of the Objective – Why Greatness Cannot be Planned. I recommend watching the talk, but if you don’t want to do that I will mention the main points further down.

For now the main point is that there are some discoveries that can only come from free exploration. You find a topic that’s interesting and you go and explore in that direction, without any specific aim other than to find what’s over there. Then at some point you start doing hill climbing to actually get results, but you can’t start off with it.

2. General Approach

In this section I’ll mention the general approach to doing research. You’re probably doing many of these things already because they’re common sense but it’s still worth pointing these things out once. Especially students often get these things wrong, and then it’s good to be able to recognize what they do differently than you, and it’s good to have the words for the common sense.

2.1 Quickly Prove Yourself Wrong

When doing research it’s easy to fool yourself. So it is very important that you go out of your way to prove yourself wrong. Feynman thought this was very important when talking about Cargo Cult science. I’m slightly misquoting him here because he doesn’t just talk about proving yourself wrong, but about a broader scientific honesty:

But there is one feature I notice that is generally missing in Cargo Cult Science. That is the idea that we all hope you have learned in studying science in school – we never explicitly say what this is, but just hope that you catch on by all the examples of scientific investigation. It is interesting, therefore, to bring it out now and speak of it explicitly. It’s a kind of scientific integrity, a principle of thought that corresponds to a kind of utter honesty – a kind of leaning over backwards. For example, if you’re doing an experiment, you should report everything that you think might make it invalid – not only what you think is right about it: other causes that could possibly explain your results; and things you thought of that you’ve eliminated by some other experiment, and how they worked – to make sure the other fellow can tell they have been eliminated.

Details that could throw doubt on your interpretation must be given, if you know them. You must do the best you can – if you know anything at all wrong, or possibly wrong – to explain it. If you make a theory, for example, and advertise it, put it out, then you must also put down all the facts that disagree with it, as well as those that agree with it.

He goes on to talk about more things that you should do, but for now I just want to talk about the part of proving yourself wrong, because it is genuinely helpful when doing research.

It’s the main difference between real research and pseudo-science. People in pseudo-sciences never try to prove themselves wrong. It’s also the main difference between real medicine and alternative medicine. People who promote crystal healing never try to prove themselves wrong. At least not seriously. Same thing between real journalism and conspiracy theorists. A real journalist will try to prove his theories wrong many times before publishing. Especially if it’s about a conspiracy.

But there is a more pragmatic reason: Proving yourself wrong early will save you from wasting time. Of course if you try to do research into a crazy theory like crystal healing, you’re wasting time. But there are also many reasonable cases where the fastest way to find out if an approach will work is to try to prove it wrong.

This can be tricky, because as Feynman also said “The first principle is that you must not fool yourself – and you are the easiest person to fool.” Meaning it’s hard for you to prove yourself wrong. It’s easier for you to fool yourself. But once you get better at proving yourself wrong, you tend to find shortcuts, ways to rule out in a day what would have taken you a month to confirm. It turns out that often you only need rough heuristics to prove yourself wrong where proving yourself right requires actually working all the way through the problem.

You want to be a little bit careful with this because sometimes good ideas lurk in areas that most people stay far away from because of some “probably won’t work” heuristic, but usually those heuristics are a good idea. And even if you don’t want to use heuristics, it’s still often faster to prove yourself wrong than to prove yourself right, so the advice stands.

For some people it’s very hard to prove themselves wrong. There is one final trick that can even help those people: For some reason we are really good at proving other people wrong. If somebody else comes to you with a crazy idea you can immediately tell that it’s a crazy idea. Much more quickly than if it was your own idea. So the final trick is to ask others to prove you wrong. Meaning just run the idea by a colleague. And then listen to what they say.

2.2 Simulated Annealing

I don’t know a good name for this, so I’ll use the name of the AI technique. You probably do this automatically, but it’s worth pointing out, because sometimes I see people who don’t do this, and they are really screwed.

Simulated Annealing is a very general approach, which roughly says that you should figure out the big picture before you figure out the details. And it does that by prescribing what your response should be when you’ve walked all the way up in hill climbing and gotten stuck. Getting stuck means you’re at some local peak and it seems like you can’t see any paths that take you any higher. And ideally you’re not just stuck for five minutes, but you’re stuck for an hour or a day or more.

The general pattern is that every time you get stuck, you do a reset. But the size of the reset becomes smaller and smaller. At first you reset your progress completely and start over from the very beginning and try an entirely different approach. After you’ve tried a few different approaches, the next time that you reset yourself, do a smaller reset so that you stay in the area that took you the furthest. Don’t try new approaches any more, but try different variations of one approach. Later you do smaller resets still and maybe just try a few different solutions to specific problems. And at the end you do really small resets and just tweak some numbers.
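The reset pattern can be sketched in a few lines. This is a sketch of the “shrinking resets” description above, not of textbook simulated annealing, which instead accepts downhill moves with a probability that shrinks as a temperature parameter is lowered. The landscape, the function names and the `reset_sizes` schedule are all assumptions made up for illustration.

```python
import random


def climb(heights, pos):
    """Plain hill climbing: move to the higher neighbor until stuck."""
    while True:
        neighbors = [i for i in (pos - 1, pos + 1) if 0 <= i < len(heights)]
        best = max(neighbors, key=lambda i: heights[i])
        if heights[best] <= heights[pos]:
            return pos
        pos = best


def climb_with_shrinking_resets(heights, start, reset_sizes, seed=0):
    """Every time we are stuck, jump up to `size` steps away and climb again.

    `reset_sizes` should go from large (try an entirely different approach)
    to small (tweak the details). We always restart from the best peak so far.
    """
    rng = random.Random(seed)
    best = climb(heights, start)
    for size in reset_sizes:
        jump = rng.randint(-size, size)
        restart = min(max(best + jump, 0), len(heights) - 1)
        candidate = climb(heights, restart)
        if heights[candidate] > heights[best]:
            best = candidate
    return best


# Made-up landscape with peaks at indices 2, 7 and 11.
landscape = [0, 1, 2, 1, 0, 3, 5, 8, 4, 2, 6, 7, 6, 1]

first_peak = climb(landscape, 0)
final_peak = climb_with_shrinking_resets(
    landscape, 0, reset_sizes=[12, 12, 6, 6, 3, 1]
)
```

The early, large resets can land anywhere on the landscape. The late, small ones can only explore the immediate surroundings of the best peak found so far, so they can never throw away the big-picture progress.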

What this means is that when you first work on a problem, you shouldn’t spend too much time fiddling around with the details. Instead try a different approach.

And then later, when you’re fiddling around with the details, you should not go back and try a whole different approach.

There is a progression to this. You often see inexperienced researchers spend too much time on the details early on. Or maybe they have come very far and are already twiddling with the numbers when they find that their whole approach was wrong and they have to start over with an entirely different approach. That is very demoralizing. You have to do that exploration early on before you ever get to the details.

Or sometimes people are just trying lots of different approaches and are never actually doing one approach seriously. Simulated Annealing says that you should walk up until you’re stuck. Don’t switch to a different approach until you’ve gotten stuck. (sometimes that takes too long and you end up spending weeks on one approach. In that case set a time limit and do a reset once a week or so)

So when you first get stuck, do big resets and try entirely different approaches. Then over time do smaller and smaller resets.

This also gives a natural end to your research. Once you’re done twiddling with the details you’re done, period. (you don’t need to go back and try an entirely different approach since you already explored those earlier)

2.3 Be Incremental

This one is not an AI technique but my own observation. It’s also something that all good researchers do automatically, but it’s worth pointing out explicitly.

You want to be incremental. In the hill climbing analogy, imagine that there are several paths already carved into the mountains where previous researchers have made progress before you. You almost always want to start off from one of those paths. In fact some of those paths have become very wide because there are lots of researchers doing work up at the end of those paths, so the path is well-trod.

You may actually want to avoid paths that are too wide, but only if you are experienced already. If you are a grad student doing your first research, don’t stray too far from where others are.

The advice to be incremental may be disappointing because you want to invent the next big theory like general relativity or the next Internet or whatever. But actually the more you read about those and how they came about, the more you realize that they were actually quite incremental. There are really very few inventions which cannot be traced back to ideas that slowly accumulated and evolved over many years. Sometimes an idea seems really impressive to the outside world because to them it’s all new. But then you look at the author’s work and find that they had been silently working on it incrementally for the last ten years.

You may think that the “be incremental” advice does not apply to the “ocean explorer” analogy of research, but you’d be wrong. Few good things have come from just setting off into completely uncharted territory. Usually you want to hop from island to island. The “Myth of the Objective” talk that I talked about above strongly emphasizes how important stepping stones are for this kind of research. The results in their program couldn’t have come about if people couldn’t have built on top of each other’s results.

2.4 Work on Several Things

The AI technique for this is called Local Beam Search (the link is to “Beam Search” because it seems like Local Beam Search is never mentioned online…) which is a variant of hill climbing where we do several searches at the same time. That’s the whole trick. Programmers are not good at naming things.

Doing several searches at the same time is an easy thing to do for a computer, but it’s hard to do for a person. But I think we can get the same benefits without literally doing the searches at the same time. I’m going to quote from the book “Artificial Intelligence – A Modern Approach” (second edition) by Russell & Norvig to list the benefits:

In a local beam search, useful information is passed among the parallel search threads. […] The algorithm quickly abandons unfruitful searches and moves its resources to where the most progress is being made.

So how do we get these benefits as a simple human who can’t do multiple searches at the same time? One thing we can do is keep track of the places where we walked down one path but could have walked down another, and explore those other paths every once in a while. Since humans have to do this sequentially, it’s actually similar to the simulated annealing I mentioned above. But the idea would be to do multiple searches at once, where each of them follows the simulated annealing approach.
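For reference, here is roughly what the computer version looks like, as a minimal sketch on a made-up 1-D landscape. The names are hypothetical, and one detail is my own simplification: the current positions stay in the candidate pool, whereas the textbook version, as far as I know, keeps only the best successors.

```python
def local_beam_search(heights, starts, rounds=20):
    """Run several hill climbs at once and pool their options every round.

    Each round collects the neighbors of all current positions, then keeps
    only the k highest candidates overall. Searches in poor regions lose
    their slots to searches in promising regions.
    """
    beam = list(starts)
    k = len(beam)
    for _ in range(rounds):
        candidates = set(beam)  # simplification: current positions stay eligible
        for pos in beam:
            for nxt in (pos - 1, pos + 1):
                if 0 <= nxt < len(heights):
                    candidates.add(nxt)
        # Sort by index first so that ties in height are broken deterministically.
        new_beam = sorted(sorted(candidates), key=lambda i: heights[i], reverse=True)[:k]
        if set(new_beam) == set(beam):
            break  # no search can improve any more
        beam = new_beam
    return beam


# Made-up landscape with peaks at indices 2, 7 and 11.
landscape = [0, 1, 2, 1, 0, 3, 5, 8, 4, 2, 6, 7, 6, 1]

beam_result = local_beam_search(landscape, starts=[0, 4, 13])
```

In this run the search that started at index 0 is dropped after two rounds, because the other two searches offer better options. All three slots end up around the two tall peaks, which is the “moves its resources to where the most progress is being made” behavior from the quote.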

One other approach to this is to always have more than one project. Here is Robert Gallager talking about this in the context of a talk about Claude Shannon:

Be interested in several interesting problems at all times. Instead of just working intensely on one problem, have ten problems in the back of your mind. Be thinking about them, be reading things about them, wake up in the morning, and review whether there’s anything interesting about any of them. And invariably […] something triggers one of those problems and you start working on it.

Why is that so important? I would say that one of the most difficult things in trying to do research is “how do you give up on a problem?” So many students doing a thesis just beat themselves over the head for year after year after year saying “I’ve got to finish this problem, I said I was going to do it and I’m going to do it.” If it’s an experiment you’re going to do, yes you can do that. You can do it more and more carefully if something isn’t working you can fix it to the point where it works. [But] if you’re trying to prove some theorem and the theorem happens to be not true, then your chances of success are very low.

If you have these ten problems in the back of your mind and there is one problem that’s been driving you crazy, what are you going to do? It’s going to sink further and further back in your mind and just because you’ve had more interesting things to do, you’ve gotten away from it. After a year you won’t even remember you were working on it. It will have disappeared. That’s a far better thing than to reason it out and say “I don’t think I can go on any further with this because of this, this and this reason.” Because your problem is you don’t understand the problem well enough to understand why you oughta give up on it. So you just find that you can do other things which become more interesting temporarily.

I think this quote is spot on, but there is an additional benefit to working on several things at the same time: There is lots of cross pollination between ideas. Also talking about Claude Shannon, this article has another good quote about this:

His information theory paper drew on his fascination with codebreaking, language, and literature. As he once explained to Bush:

“I’ve been working on three different ideas simultaneously, and strangely enough it seems a more productive method than sticking to one problem.”

The final angle in which this is helpful is that research just takes time. Some things can’t be hurried. If you work on multiple things at the same time, then that allows you to work on one thing for a longer time. If one project needs to take ten years, then there is no way that you can work on it full time for ten years. But if you also work on other things during those ten years, all of a sudden it’s doable.

2.5 Focused Mode and Diffuse Mode

I’m using Barbara Oakley’s terms from her Learning How to Learn online course.

The idea is that the brain has two distinct ways of working: The focused mode where you actively work on a problem, and the diffuse mode where you’re doing something else entirely but your subconscious is working on the problem. The diffuse mode is responsible for a lot of eureka stories, including the original one: Archimedes was stuck on a problem, trying to figure out whether a crown was pure gold or not. Then on a trip to a bath he is relaxing, mind drifting off, watching the water move, when suddenly the answer jumps into his head.

For me I also often get ideas like this while taking a shower. Some people say that physical exercise helps them get into the right mode. That doesn’t work for me, but long walks certainly do work. Some people say that they only get into this mode when sleeping and that they wake up with good ideas. That works very rarely for me, but maybe you have more luck with it. It seems like you need to be somewhat relaxed, your mind mostly idle. Then the background processes in your brain get to work and can form new connections that weren’t clear before. You have to stop thinking about the problem for a while and then an answer may drift back up from some deeper part of your brain that you don’t have direct access to.

The tricky part is that you can’t easily schedule what your brain is going to work on in the diffuse mode. Distractions like smart phones are really harmful, but even if you turn your phone off you can easily get into a mode where all you can think of is the latest controversy in the news. Rich Hickey talks about how he deals with this issue in his talk Hammock Driven Development: (he talks about sleep because for him a lot of this thinking happens while sleeping)

So imagine somebody says “I have this problem…” and you look at it for ten minutes and go to sleep. Are you going to solve that problem in your sleep? No. Because you didn’t think about it hard enough while you were awake for it to become important to your mind when you’re asleep. You really do have to work hard, thinking about the problem during the day, so that it becomes an agenda item for your background mind. That’s how it works.

For me sleeping doesn’t work, but I’ve certainly found the same thing to be true when going for long walks. If the last thing that I did before the walk was check the news, my brain will keep on going back to whatever I was reading. If the last thing was that I worked really hard on a problem, I may have a chance of finding a solution to the problem while going for a walk. (can’t force it though, you need to allow your mind to drift off and then drift back to the topic)

This is no guarantee for success. Oftentimes the solution of the diffuse mind doesn’t actually work. Or it’s just one step of the solution and after you take that one step you’re just as stuck as you were before. But anytime that I’m making really good progress, it’s a combination of focused mode and diffuse mode work.

2.6 Setting Goals and Changing Goals

This is another one that is so basic that it’s rarely stated, but I sometimes see people confused about this. For example I was going through the book reviews of Kenneth Stanley’s book about how objectives can be harmful, and one person says that the book is clearly wrong because there are studies about how helpful goal setting is, with a link to this article. And since I believe in proving myself wrong I naturally read that article. It turns out that the professor mentioned in that course, Jordan Peterson, has made the course available on YouTube. And if you listen to what he actually says about setting goals, it’s a lot more compatible with Kenneth Stanley’s idea:

You don’t get something you don’t aim at. That just doesn’t work out. So lots of people aim at nothing and that’s what they get. So if you aim at something you have a reasonable crack at getting it. You tend to change what you’re aiming at a bit along the way, because like, what do you know? You aim there, you’re wrong. But you get a little closer. And then you aim [slightly off to the side], and you’re still wrong. You get a little closer and you aim [in a slightly different direction again], and as you move towards what you’re aiming at, your characterization of what to aim at becomes more and more sophisticated. So it doesn’t really matter if you’re wrong to begin with as long as you’re smart enough to learn on the way, and as long as you specify a goal.

This is spot on as far as I’m concerned. (I had to modify the quote for the aiming because you can’t see him aiming with his arms if you don’t watch the video) You need a goal to start with. But as you travel towards that goal, you may find reasons to change the goal and you shouldn’t be afraid to do that if you have a good reason. He goes on to say that it’s OK to specify a vague goal as long as you’re going to refine that goal along the way. (I think there is also some connection here to Scott Adams’ theory of “using systems instead of goals” but I haven’t thought that through)

Kenneth Stanley developed an algorithm called “Minimal Criterion Novelty Search” after his discovery about how harmful it can be to aim for a goal too rigidly. Novelty Search just tries to visit as many different places in the search space as possible. Meaning it generates novel approaches to whatever problem you’re working on. Doesn’t matter if those novel approaches don’t look like they would solve the problem. “Minimal Criterion” says that the novel behaviors should still behave above some minimum threshold like “don’t get eaten by predators before you reproduce.” You can define your own minimal criterion for your problem, but it shouldn’t be very challenging to overcome. He has then shown that for tricky problems, novelty search is better than goal oriented search because novelty search doesn’t go for the goal and doesn’t get stuck on whatever the “trick” is. It just tries to reach as many different points as possible and will eventually automatically find its way around the trick.
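To give a feel for the idea, here is a toy sketch. It is not Kenneth Stanley’s actual algorithm, which works on populations of evolving behaviors. It only shows the core move of preferring whatever is most different from everything visited so far, subject to an easy minimal criterion. The landscape, the function names and the `min_height` criterion are assumptions made up for illustration.

```python
def novelty(candidate, archive):
    """How far a candidate is from the nearest place visited so far."""
    return min(abs(candidate - seen) for seen in archive)


def novelty_search(heights, start, steps, min_height=0):
    """Repeatedly visit the most novel reachable position.

    The heights are never used to rank candidates. They only feed the
    minimal criterion, which is deliberately easy to satisfy.
    """
    archive = [start]
    for _ in range(steps):
        candidates = sorted({
            nxt
            for pos in archive
            for nxt in (pos - 1, pos + 1)
            if 0 <= nxt < len(heights) and heights[nxt] >= min_height
        })
        if not candidates:
            break
        most_novel = max(candidates, key=lambda c: novelty(c, archive))
        if novelty(most_novel, archive) == 0:
            break  # nothing new left to visit
        archive.append(most_novel)
    return archive


# Made-up landscape: starting on the small hill at index 2, a goal-driven
# climber would refuse to walk down into the valley at index 4.
landscape = [0, 1, 2, 1, 0, 3, 5, 8, 4]

visited = novelty_search(landscape, start=2, steps=7)
```

The search wanders off in the “wrong” direction first and then walks straight through the valley, because going downhill is just another new place to it. It reaches the tall peak at index 7 without ever having aimed for it.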

3. Getting Unstuck

The diffuse mode from the last section is a good way to get unstuck. But it’s a bit unreliable, and it needs to be fed. You need to work on the problem hard before the diffuse mode can provide you with a solution. But how do you do that if you’re stuck? And are there any more direct ways to get unstuck? In the hill climbing analogy, imagine you have come across a steep cliff and all you can see is ways back down or sideways.

Some of the advice here is about finding good sideways steps that have helped others. Other advice is about discovering as many sideways steps as you can, or about finding new starting points. It’s all about making movement in the hope that you will come across some hidden path that can take you up again.

3.1 State the Problem

This is the Feynman Algorithm for solving problems:

- Write down the problem.

- Think real hard.

- Write down the solution.

The algorithm is of course a joke because Richard Feynman made physics look so easy. Except that a friend of mine once said that the Feynman algorithm actually worked for him. Since then I have tried it a few times and it has actually really helped me, too. The important step seems to be step 1: Write down the problem. Sometimes we seem to be stuck, but we’re not actually all that clear on what exactly we’re stuck on. Putting it into writing forces us to consider what exactly the problem is, and sometimes just doing that is enough. If it’s not, step 2 has also brought me to the solution. Literally just sitting there staring at the formulation of the problem on the paper. Seems unlikely, but sometimes it works. (because you never actually thought about the explicitly stated problem)

A related solution from computer science is Rubber Duck Debugging. The idea is that if you’re completely stumped on trying to figure out a bug in your code, sometimes it helps to explain it to somebody else. That other person doesn’t actually have to understand what you’re talking about. It just helps talking through the problem. So a rubber duck is good enough for this.

I have to confess that I don’t find it easy to talk to a rubber duck, so I usually try explaining my current problem to my girlfriend. She doesn’t know a whole lot about computer science, but if I say that “I just need to talk through this problem once” then she will usually make an effort to listen. It’s also a good exercise to try to explain the problem in a way that somebody who is not familiar with algorithms and data structures can understand. The goal isn’t really to get her to understand it, but to get myself to talk about the problem fully enough that she could understand it.

Oftentimes that’s all it takes to find the thing that you forgot to check.

One thing that has really helped me on this is George Polya’s book “How to Solve It” which makes you ask yourself these questions:

What is the unknown? What are the data? What is the condition? Is it possible to satisfy the condition? Is the condition sufficient to determine the unknown? Or is it insufficient? Or redundant? Or contradictory?

Draw a figure. Introduce suitable notation.

Separate the various parts of the condition. Can you write them down?

It’s an exercise in getting good about “stating the problem.” And going through it explicitly somehow helps. Polya also points out that sometimes you’re stuck simply because you lost sight of the goal, which is another explanation for why stating the problem helps you get unstuck.

Polya also has a list of proverbs in his book that I will quote sometimes, here are his proverbs for this one:

Who understands ill, answers ill. (who understands the problem badly, answers it badly)

Think on the end before you begin.

A fool looks to the beginning, a wise man regards the end.

A wise man begins in the end, a fool ends in the beginning.

3.2 Simplify the Problem

Here is Claude Shannon about simplifying problems:

Almost every problem that you come across is befuddled with all kinds of extraneous data of one sort or another; and if you can bring this problem down into the main issues, you can see more clearly what you’re trying to do and perhaps find a solution. Now, in so doing, you may have stripped away the problem that you’re after. You may have simplified it to a point that it doesn’t even resemble the problem that you started with; but very often if you can solve this simple problem, you can add refinements to the solution of this until you get back to the solution of the one you started with.

And here is Robert Gallager again about an experience when he had a complex problem and asked Claude Shannon for help:

He looked at it, sort of puzzled, and said, ‘Well, do you really need this assumption?’ And I said, well, I suppose we could look at the problem without that assumption. And we went on for a while. And then he said, again, ‘Do you need this other assumption?’ And he kept doing this, about five or six times. At a certain point, I was getting upset, because I saw this neat research problem of mine had become almost trivial. But at a certain point, with all these pieces stripped out, we both saw how to solve it. And then we gradually put all these little assumptions back in and then, suddenly, we saw the solution to the whole problem.

Another thing I like to do for this is to solve the problem for one case. Instead of trying to attack the general problem, pick a simple case and solve it. Then another. Then another. Then another. Then look for patterns. Don’t look for patterns until you’ve solved three or four specific cases. The cases I usually look at are “what if these are all zero?” Or “what if this always takes the same amount of time?” Or “what if everybody wants the exact same thing?” And then further questions are small variations on that like “what if these are all 1? Or what if these are all zero except for that variable?” Or “what if these take different amounts of time but they start at regular intervals?” Or “what if everybody wants the exact same thing except for that one special case?”
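As a small illustration of “solve specific cases first, look for patterns later”, here is a sketch using a made-up stand-in problem: how many handshakes happen when everybody in a group of n people shakes hands with everybody else? The problem and all names are hypothetical and only there to show the workflow.

```python
from itertools import combinations


def handshakes(n):
    """Solve one specific case by brute force: count every pair among n people."""
    return sum(1 for _ in combinations(range(n), 2))


# Solve several small cases before thinking about any general formula.
cases = {n: handshakes(n) for n in range(1, 6)}

# Only now guess a pattern, and check the guess against every solved case.
guess_holds = all(count == n * (n - 1) // 2 for n, count in cases.items())
```

The brute force step needs no insight at all, which is the point. The solved cases 0, 1, 3, 6, 10 are what suggest the formula, and they also act as a cheap check on any formula you come up with.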

Polya in “How to Solve It” of course also has questions for this:

If you cannot solve the proposed problem try to solve first some related problem. Could you imagine a more accessible related problem? A more general problem? A more special problem? Keep only a part of the condition, drop the other part; how far is the unknown then determined, how can it vary? Could you change the unknown or the data, or both if necessary, so that the new unknown and the new data are nearer to each other?

There is a subtle benefit to simplifying the problem which I’ll explain using the concept of “overfitting” from machine learning. Overfitting happens when your algorithm didn’t really learn the underlying pattern, but just memorized all the training examples. (and then doesn’t work on new examples) Overfitting means that you learned both the signal and the noise. One way to make overfitting less likely is to simplify or to generalize because simplifying the problem reduces the noise in the problem. (I will talk about generalizing further down) This is a bit of an abstract concept and probably deserves a fuller discussion (particularly because some simplifications actually increase your risk of overfitting) but for now I just want to say that solving a simplified problem can reveal broader truths than solving a complex problem, so don’t feel bad for simplifying. It can have real benefits.
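Here is a minimal sketch of what “memorized the training examples” means in practice. All numbers are made up: the training data roughly follows y = 2x with a bit of noise, and the two “models” are deliberately extreme.

```python
# Made-up data: roughly y = 2x, with some noise in the training examples.
train = [(1, 2.1), (2, 3.9), (3, 6.2), (4, 7.8)]
unseen = [(5, 10.0), (6, 12.0)]

memorized = dict(train)


def memorizer(x):
    """Overfit model: perfect recall of seen examples, no idea about the rest."""
    return memorized.get(x, 0.0)


# Simplified model: a single slope through the origin, fitted by least squares.
slope = sum(x * y for x, y in train) / sum(x * x for x, y in train)


def line(x):
    """Simple model: ignores the noise and keeps only the overall trend."""
    return slope * x


def mean_squared_error(model, data):
    return sum((model(x) - y) ** 2 for x, y in data) / len(data)


train_error_memorizer = mean_squared_error(memorizer, train)
train_error_line = mean_squared_error(line, train)
unseen_error_memorizer = mean_squared_error(memorizer, unseen)
unseen_error_line = mean_squared_error(line, unseen)
```

The memorizer has zero error on the training data because it reproduces the noise exactly, and it is useless on the unseen points. The line is slightly wrong on every training example and far better on the new ones.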

3.3 Draw a Picture

We already had Polya’s advice of “Draw a figure. Introduce suitable notation” above, but this goes further. We can often use the visual processes in our brain to solve problems.

This applies to many more problems than math problems. Lots of math has geometric interpretations, but so do other fields. You can draw diagrams or plots or maps or simplified sketches or any number of other things.

One trick is to try to visualize as much data as possible. Draw scatter plots. Then draw small multiples of scatter plots. Add layers, colors, work at different scales, anything that allows you to show more data without confusion. Let your eye do the filtering later. While we would have a hard time dealing with thousands of numbers in writing, we have a very easy time finding patterns in thousands of numbers in a scatter plot. Here is a quote from Edward Tufte’s Envisioning Information (page 50):

We thrive in information-thick worlds because of our marvelous and everyday capacities to select, edit, single out, structure, highlight, group, pair, merge, […] focus, organize, condense, reduce, […] categorize, catalog, […] isolate, discriminate, distinguish, […] filter, lump, skip, smooth, chunk, average, approximate, cluster, aggregate, outline, summarize, itemize, review, dip into, flip through, browse, […].

Visual displays rich with data are not only an appropriate and proper complement to human capabilities, but also such designs are frequently optimal. If the visual task is contrast, comparison and choice – as it so often is – then the more relevant information within eyespan, the better. Vacant, low-density displays, the dreaded posterization of data spread over pages and pages, require viewers to rely on visual memory – a weak skill – to make a contrast, a comparison, a choice.

A common theme of Tufte is that we are really good at looking at lots of data. It’s not good to only show parts of the data at a time. Better to show as much as possible, and people will focus on what they want.

Except of course it’s not quite that simple, because if you just show as much as possible, you often have an unreadable mess. Edward Tufte’s books are all about showing as much as possible without having a mess on your hands. But really you can get pretty far by just trying and iterating on your visualizations. Try combining visualizations, then try separating them. Try looking at multiple things next to each other. Try zooming out or try zooming in etc.

3.4 Try to Use a Related Problem

This is one of the main points in Polya’s “How to Solve It.” He thinks mobilizing prior knowledge is one of the most important things you can do. To do this you of course have to be fluent in the field that you’re researching. Here are his questions related to this:

Have you seen it before? Or have you seen the same problem in a slightly different form?

Do you know a related problem? Do you know a theorem that could be useful?

Look at the unknown! And try to think of a familiar problem having the same or a similar unknown.

Here is a problem related to yours and solved before. Could you use it? Could you use its result? Could you use its method? Should you introduce some auxiliary element in order to make its use possible?

This is also a point where it’s useful to work on several things at the same time. Because somehow it seems that formulas or methods or insights from one area often apply in a different area. I don’t know why that is. Maybe there are only a finite number of concepts and connections between them, so we see the same concepts in several fields. (whatever explanation we come up with would also have to explain why garbage-can decision making works so well, so my explanation isn’t very good…) Here is Feynman talking about this:

[After deriving the conservation of angular momentum from the laws of gravity]. And thus we can roughly understand the qualitative shape of the spiral nebulae. We can also understand in the same way the way a skater spins when he starts with his leg out, moving slowly, and as he pulls the leg in he spins faster.

But I didn’t prove it for the skater. The skater uses muscle force. Gravity is a different force. Yet it’s true for the skater. Now we have a problem. We can deduce, often, from one part of physics, like the law of gravitation, a principle which turns out to be much more valid than the derivation.

[…]

So we have these wide principles which sweep across all the different laws. And if one takes too seriously these derivations, and feels that “this is only valid because this is valid” you can not understand the interconnections of the different branches of physics. Some day, when physics is complete, then all the deductions will be made. But while we don’t know all the laws, we can use some to make guesses at the theorems which extend beyond the proof. So in order to understand the physics one must always have a neat balance and contain in his head all the various propositions and their interrelationships because the laws often extend beyond the range of their deductions.

(edited heavily for brevity)

This is true across parts of physics and it’s also true across entirely different fields, but I should also state that most of the time, the “related problems” you want to look at are going to be pretty close by. Polya gives examples like “to find the center of mass of a tetrahedron, see if you can use the method of the simpler related problem of finding the center of mass of a triangle.”

But I also want to bring it back to Polya’s idea of mobilizing prior knowledge: There is a lot of evidence that in most fields, the main difference between experts and novices is how much experience or knowledge of the field they have, and how good they are at organizing this knowledge. This comes out of Gary Klein’s work with expert firefighters, which Daniel Kahneman has written about, but also from research about chess grandmasters. The better somebody gets at chess, the more they use their memory. (as measured by brain activity) So you have to build that pool of knowledge. You have to know lots of related problems and you have to be able to draw connections to them.

3.5 Restate the Problem

This is closely related to the previous point and it’s also something that I can find plenty of quotes for. Here is Claude Shannon for example:

Another approach for a given problem is to try to restate it in just as many different forms as you can. Change the words. Change the viewpoint. Look at it from every possible angle. After you’ve done that, you can try to look at it from several angles at the same time and perhaps you can get an insight into the real basic issues of the problem, so that you can correlate the important factors and come out with the solution.

Polya’s questions about this topic are simpler in that they are simply “Can you restate the problem? Could you restate it still differently? Go back to definitions.”

Polya then goes on to list several reasons for why this helps. One is that a different approach to the problem might reveal different associations, allowing us to find other related problems. (see the point above) A second reason I will just quote:

We cannot hope to solve any worth-while problem without intense concentration. But we are easily tired by intense concentration of our attention upon the same point. In order to keep the attention alive, the object on which it is directed must unceasingly change.

If our work progresses, there is something to do, there are new points to examine, our attention is occupied, our interest is alive. But if we fail to make progress, our attention falters, our interest fades, we get tired of the problem, our thoughts begin to wander, and there is danger of losing the problem altogether. To escape from this danger we have to set ourselves a new question about the problem.

The new question unfolds untried possibilities of contact with our previous knowledge, it revives our hope of making useful contacts. The new question reconquers our interest by varying the problem, by showing some new aspect of it.

See also the point about Simulated Annealing above which says that you should frequently try new approaches. But the size of the change that you make should differ over time.

And finally here is Feynman talking about the same idea. When discussing what happens if several theories have the same mathematical consequences, he says that “every theoretical physicist that’s any good knows six or seven different theoretical representations for exactly the same physics and knows that they’re all equivalent, and that nobody is ever going to be able to decide which one is right, but he keeps them in his head hoping that they will give him different ideas.” As for how they may help, he says that a simple change in one approach may be a very different theory than a simple change in a different approach, and that changes which look natural in one theory may not look natural in another.

3.6 Look at the Data, Sort the Data

This one is related to some of the points that I made in “draw a picture” above, but it’s also worth talking about the data separately, without the context of a picture. To start off with here are Polya’s questions related to this topic:

Did you use all the data? Did you use the whole condition? Have you taken into account all essential notions involved in the problem?

Could you derive something useful from the data? Could you think of other data appropriate to determine the unknown? Could you change the unknown or the data, or both if necessary, so that the new unknown and the new data are nearer to each other?

One thing I would like to point out here is that there are many ways to organize data. I have literally given talks where all I did was take existing data and organize it in a different way to put emphasis on different conclusions. The original authors had organized their data by categories. I had organized it by strength of correlation. There are many ways to sort, filter, group or abstract data, and there are often many different insights to be gained depending on how you go about doing this.

Polya is referring to something else here though. For him the “data” are the information given for a problem. His example problem for this question is “We are given three points A, B and C. Draw a line through A which passes between B and C and is at equal distance to B and C.” And his point is that after drawing a picture of the dots with the desired line, the solution comes almost automatically if you just draw lines using all the available data. (the points A, B and C, as well as the desired line) So for your problem the data may just be any available information.

Changing the data can mean a lot of things from “collect more information” to “if I assume that this variable is always 0, would that simplify the problem?” And changing the unknown means that if the data suggests a different goal, at least consider that other goal. Maybe it’s a better goal than what you were looking for.
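To make the earlier point about reorganizing data a bit more concrete, here is a minimal Python sketch of the same rows presented two ways: grouped by category, and sorted by strength of correlation with some outcome. The variable names and numbers are invented purely for illustration. They are not from any of the talks or papers mentioned here.

```python
import math

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)

# Hypothetical data: (category, variable name, measured values).
outcome = [1.0, 2.0, 3.0, 4.0, 5.0]
rows = [
    ("hardware", "cache_size", [2.0, 1.0, 4.0, 3.0, 5.0]),
    ("hardware", "clock_speed", [1.1, 2.0, 2.9, 4.2, 5.0]),
    ("software", "thread_count", [5.0, 4.0, 3.0, 2.0, 1.0]),
    ("software", "log_level", [3.0, 1.0, 3.0, 5.0, 3.0]),
]

def by_category(rows):
    """The 'original authors' view: group by category, then by name."""
    return sorted(rows, key=lambda r: (r[0], r[1]))

def by_correlation(rows, outcome):
    """The alternative view: strongest relationship (of either sign) first."""
    return sorted(rows, key=lambda r: abs(pearson(r[2], outcome)), reverse=True)

if __name__ == "__main__":
    print("By category:")
    for category, name, _ in by_category(rows):
        print(f"  {category:9s} {name}")
    print("By strength of correlation:")
    for category, name, values in by_correlation(rows, outcome):
        print(f"  {name:13s} r = {pearson(values, outcome):+.2f}")
```

The data is identical in both listings. Only the ordering changes, and with it which rows your eye lands on first.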

3.7 Play Around

Richard Feynman was famous for this because he said that his Nobel prize came directly from playing around with physics. Here is the quote for that and it’s a great read. (unfortunately too long to be included in this blog post)

In the Feynman quote playing around means investigating a problem that has no practical applications. But you can even do this within a problem. You can play around with equations. Do random substitutions. See what the consequences would be if you cube a variable rather than squaring it. Take the equations in a circle and back to where they started. Do anything that you are curious about. You can play around with experiments. If you are working on some variable and normal values are in the range from 20 to 30, then try the values 1 and 100, just to see what happens. If nothing bad happens, try the values 0.1 and 1000. Antibiotics were discovered because an experiment went wrong and Alexander Fleming reacted with curiosity rather than frustration.

Here is a quote from Carver Mead that is in a similar vein to the Feynman story above:

John Bardeen was just the most unassuming guy. I remember the second seminar Bardeen gave at Caltech — I think it was just after he got his second Nobel Prize for the BCS theory, and it was some superconducting thing he was doing. He had one grad student working on it and they were working on this little thing, and he gave his whole talk on this little dipshit phenomenon that was just this little thing. I was sitting there in the front row being very jazzed about this, because it was great; he was still going strong.

So on the way out, people were talking and one of my colleagues was saying, “I can’t imagine, here’s this guy that has two Nobel Prizes and he’s telling us about this dipshit little thing.” I said, “Don’t you understand? That’s how he got his second Nobel Prize.” Because most people, one Nobel Prize will kill them for life, because nothing would be good enough for them to work on because it’s not Nobel Prize–quality stuff, whereas if you’re just doing it because it’s interesting, something might be interesting enough that it’s going to be another home run. But you’re never going to find out if all you think about is Nobel prizes.

3.8 Read a Related Paper

This one is connected to the point about “use a related problem” above, but there is additional value to be gained from reading a related paper that I haven’t talked about above.

Reading a related paper is especially valuable if you try to reproduce the related paper. For me that’s often easy to do in computer science because I can implement the program. If it’s hard to do in your field, don’t be afraid to take shortcuts. (potentially huge shortcuts) You’re not trying to verify the paper. The value of the exercise actually comes from walking in other people’s shoes for a while. See what they did and why they did it. Criticize their ideas and their approach.

If you start from the other paper’s starting point, you will come across plenty of opportunities to do things differently. Maybe one of those different paths can give you an idea for your problem. And different starting points run into different problems, which sometimes allows you to dodge a problem that you ran into. Meaning the problem literally doesn’t even show up just because you came from a different angle.

Another thing I like to do is read old papers. You will be surprised at which alternatives they explored back then. (whatever “back then” means for your field) When a field is young, people are more open-minded. Often, the old alternative theories are obviously ridiculous now, but sometimes there are ideas there that should be revisited. Even if you don’t come across anything like that, I still just get random ideas from exposing myself to naive (but smart) ways of thinking about the problem.

3.9 Start From the End, Working Backwards

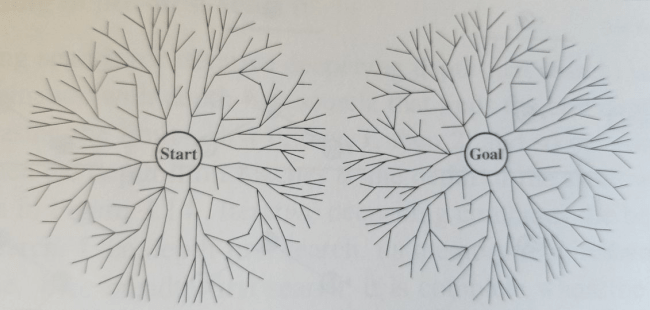

Just as reading a paper is a good exercise for getting a different view point, so is starting from the end. The AI method for this is called bidirectional search, and there are real mathematical reasons for why this helps. Here is the picture for bidirectional search from Russel & Norvig’s “Artificial Intelligence – A Modern Approach”:

To explain this image, imagine we have no idea where the goal is. So we start branching out from the start point, exploring all directions. The longer this keeps on going, the bigger the area we have to explore and the more we’ll slow down. If we also search from the goal, we can cut that time down dramatically. Instead of having to make one very big circle, we can make two small circles. In this picture the circles are about to touch, and as soon as they touch it’s an easy exercise to connect the circles and to draw a single path connecting the start to the goal.

With this picture in mind you can also see why so much of the advice above is about finding different starting points: If we had multiple starting points, chances are good that the circles can be even smaller. The further we move on from a starting point the more expansion slows (because the number of paths grows in proportion to the area, which grows with the square of the radius) so you want to be incremental (pick a goal that’s not too far away) and you may want to try multiple starting points.
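The “two small circles instead of one big circle” argument can be checked with a small experiment. The following is a rough Python sketch, not the algorithm as presented in the textbook: it runs a breadth-first search on an open, obstacle-free grid and counts how many cells get visited when searching from the start only, versus growing from both ends in alternation. The grid, the function names and the distance of 20 are all assumptions made for illustration.

```python
def neighbors(cell):
    """The four grid cells adjacent to `cell` on an unbounded grid."""
    x, y = cell
    return [(x + 1, y), (x - 1, y), (x, y + 1), (x, y - 1)]

def grow(visited, frontier):
    """Expand the search by one layer; returns the new frontier."""
    next_frontier = []
    for cell in frontier:
        for n in neighbors(cell):
            if n not in visited:
                visited.add(n)
                next_frontier.append(n)
    return next_frontier

def one_sided_search(start, goal):
    """Grow outward from the start until the goal is reached.
    Returns the number of cells visited."""
    visited, frontier = {start}, [start]
    while goal not in visited:
        frontier = grow(visited, frontier)
    return len(visited)

def two_sided_search(start, goal):
    """Grow from start and goal in alternation until the regions touch.
    Returns the total number of cells visited by both sides."""
    visited_a, frontier_a = {start}, [start]
    visited_b, frontier_b = {goal}, [goal]
    grow_a = True
    while visited_a.isdisjoint(visited_b):
        if grow_a:
            frontier_a = grow(visited_a, frontier_a)
        else:
            frontier_b = grow(visited_b, frontier_b)
        grow_a = not grow_a
    return len(visited_a) + len(visited_b)

if __name__ == "__main__":
    start, goal = (0, 0), (20, 0)
    print("one-sided:", one_sided_search(start, goal))
    print("two-sided:", two_sided_search(start, goal))
```

On a flat grid like this the two-sided search visits roughly half as many cells, which matches the area argument: two circles of half the radius cover about half the area of one big circle. In problems where the number of options multiplies at every step rather than growing with the area, the saving is much larger.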

Now strictly speaking bidirectional search is not a valid thing to do when doing hill climbing, because in hill climbing we have no idea where the goal is. But usually when doing research you have at least some idea of what you’re looking for or what you expect to find. Or you have some idea of what would overcome the current thing that you’re stuck on. Sometimes it helps to just make goals up. Meaning literally say “it would be really helpful if X was true” and then work backwards, try to figure out what you would need to make X true. Making good guesses as to what are good points to work backwards from is something that takes practice.

3.10 Dive Deeper

We all work at some level of abstraction, but sometimes you need to dive deeper and get into the lower levels. Meaning you need to take apart the machine that you’re working with and put it back together. Hook the sensors up directly to your computer instead of a separate display. (so you can write your own display code) Step through the lower level code. Write your own version of the lower level code. Multiply the equations all the way out. Run through them with real world numbers instead of using abstract symbols.

Meaning do the work that the people who provided you with your tools did.

Here is Bob Johnstone talking about Nobel laureate Shuji Nakamura:

Modifying the equipment was the key to his success… For the first three months after he began his experiments, Shuji tried making minor adjustments to the machine. It was frustrating work… Nakamura eventually concluded that he was going to have to make major changes to the system. Once again he would have to become a tradesman, or rather, tradesmen: plumber, welder, electrician — whatever it took. He rolled up his sleeves, took the equipment apart, then put it back together exactly the way he wanted it. …

Elite researchers at big firms prefer not to dirty their hands monkeying with the plumbing: that is what technicians are paid for. If at all possible, most MOCVD researchers would rather not modify their equipment. When modification is unavoidable, they often have to ask the manufacturer to do it for them. That typically means having to wait for several months before they can try out a new idea.

The ability to remodel his reactor himself thus gave Nakamura a huge competitive advantage. There was nothing stopping him; he could work as fast as he wanted. His motto was: Remodel in the morning, experiment in the afternoon. …

Previously he had served a ten-year self-taught apprenticeship in growing LEDs. Now he had rebuilt a reactor with his own hands. This experience gave him an intimate knowledge of the hardware that none of his rivals could match. Almost immediately, Nakamura was able to grow better films of gallium nitride than anyone had ever produced before.

(from Brilliant!: Shuji Nakamura And the Revolution in Lighting Technology, p 107)

I want to caution against being too eager about this. You can waste huge amounts of time diving into the deeper levels. There is an infinite amount of work down there, and there are reasons why we work at higher levels. The approach for this is to do the smallest dive possible. Only if that doesn’t work should you dive into the lower levels for longer amounts of time. (the quote above mentions how Shuji Nakamura was frustrated for three months before he decided to dive deeper. That sounds like a reasonable amount of time)

A related problem is that sometimes you need to doubt the lower levels. You have to be especially careful about this, but it does happen that the lower level formulas are wrong about something. Even the laws of physics still have holes in them which we have to fill up with Dark Matter and Dark Energy. That doesn’t mean that you should immediately question those laws of physics. You should do the smallest intervention possible and dive one level down. Don’t ever skip levels. Meaning first question whether something in your experiment is wrong. Then question whether your equipment is wrong, then maybe question if a formula from a previous paper is wrong, then slowly work your way down. Only if no higher level mistake can explain your observations should you keep on diving deeper. Think of it as detective work. There are heuristics for what to doubt (“how many known problems does this have?” “how much would break if this changed?”) but you will often follow the heuristics automatically if you just work one level at a time. In computer science this still happens with some regularity, and here is a good read about somebody who did this properly and worked their way slowly through every level until they could conclude that they had found a hardware bug.

3.11 Get Some Criticism

Sometimes it helps to show your unfinished idea to someone who is going to hate it. It’s a very unpleasant experience to do this. But if you do this you will hear all the many reasons why your idea can’t possibly work and why you should just abandon it right now. This can do two things: 1. It can actually increase your resolve to fix this problem. (I’ll show that idiot who thinks this can’t be done) 2. It brings up areas that you have avoided so far. Somehow, people who hate your idea are really good at finding open wounds that they can drive their thumb into to hurt you. Often times those open wounds are what you have avoided even though it’s exactly what you should be working on, as unpleasant as that may be. It sucks when somebody tells you “your idea sucks because it can’t deal with X” because you suspect that it’s true and you have unconsciously avoided dealing with X so far. But it can feel great when you then go back and finally tackle X and it turns out that you find a really elegant way to solve that problem, proving the idiot hater wrong and making some progress while you were at it.

The Internet is a great source for this kind of negativity. Sometimes coworkers and friends can identify your problem spots in a nicer way, but the problem with coworkers and friends is that they often have the same mindset as you. You can avoid that by asking new people in your group for advice. You have to get them when they’re still in the “why the heck do we do it like this?” stage, before they have advanced to the acceptance of the “this is just how we do things here” stage. So it’s tricky. (the two stages may not be this obvious) The most reliable way to get criticism is to ask someone who will hate your idea.

I started this section off with making this sound totally sucky. Because it usually is, and to do this you have to be ready for the unpleasant emotions. But this can actually be a more or less sucky experience, depending on who you get the criticism from. When you’re on one side of an argument, it’s easy to find someone on the other side who is a bit of an idiot and then you point and laugh and say “look at how much of an idiot they are on the other side.” That is easier for you to do, but it’s harder to learn from that. You have to put in more work to understand their point. And even though you’ll dismiss it, it will still negatively affect your mood. The better way to go is to find a smart person on the other side who can articulate themselves well. Ideally they can even state your viewpoint pretty well and can still tell you why their side is right. It’s easier to learn from those people, but you won’t naturally seek them out because you’ll learn all the parts where you are wrong.

3.12 Clean Up

Unfortunately I lost my sources for this. Somebody pointed out that when a machine is broken and you can’t figure out how to get it to work again, it helps to clean the machine. Somehow clean machines are easier to fix. The same thing is true for code. Clean up the code and try fixing it again.

Part of the value in this is that while you’re cleaning up you get more familiar with the code. If you see something like “this loop could be simplified without changing the result” and then you actually do the simplification, you’ll get more familiar with the code. Sometimes you stumble on things that actually needed to be complicated, revealing some detail that you hadn’t considered before. But usually when you do this nothing special happens. You just clean up and spend a bunch of time with the code. But that alone sometimes helps give you new ideas.

A relevant link for this is this article. It talks about a reality TV show where a successful businessman helps turn struggling businesses around. And the article notices how he spends a surprising amount of time just cleaning up. So it helps in other areas, too.

3.13 Do Something Stupid

If you’re out of all other options, sometimes you should just do something stupid.

Do something that would never work. Do something that might work, but it’s obviously inefficient or inelegant. Add five special cases. Do something hand-wavy that would never survive peer-review. Assume something that you can’t justify assuming. Do something where you already know three cases where it won’t work. Sometimes those surprise you by unexpectedly working or by giving you an answer that is almost right.

Do you have an idea that probably won’t help and it involves going through fifty cases that take an hour of tedious work each? Sometimes you just gotta do it, even if it probably won’t help. Repetition helps understanding, so maybe you will discover a new angle. Or maybe you will find ways to automate the work.

If all of the other advice for getting unstuck hasn’t helped, doing something stupid can help. In the hill climbing analogy it’s taking a step downhill. Or spending way too much work on a sideways step. The idea is to specifically do what you have tried to avoid doing. Obviously don’t do this as your first attempt at getting unstuck. But this has helped me on past problems, because when I intentionally made a problem worse, it did get worse but not in the way I expected. And that helped me understand why my previous attempts at solving the problem all had no effect.

If after this last point you’re still stuck, maybe try being more incremental. Maybe the thing you’re trying to do is just not ready to be tackled yet. Find a half-way goal and aim for that. Otherwise I’ll talk about making progress next, and there may be more hints there.

4. Making Progress

In this part I will talk about the normal day to day things that you should do all the time. Why didn’t I put this before the “getting unstuck” section? Because getting unstuck is more interesting and now that I have your attention, I can spend it on making you read things that you should do every day.

Polya’s book “How to Solve It” has a chapter called “Wisdom of Proverbs” in which he talks about some of these always applicable things using proverbs. I kinda like that. It’s cute. So I will quote his proverbs when appropriate.

4.1 Go Up

This one is trivial, because this is what we have been talking about for the whole list. Going up means taking one step towards your goal.

Even though this is obvious, I often catch myself doing this wrong. I’ll be thinking so much about all the possible paths I could take and which problem I would encounter where, that I never actually end up taking a step. For me as a programmer a step may just mean “start writing some code.” (and don’t worry too much about organizing for now) Or it may just mean “work through a few cases” or just doing anything that gets you to actually do something as opposed to just thinking about it. Doing helps with thinking. I’ve found that solutions just come automatically as soon as I start working. Half the problems I worried about never actually show up. Half of the remaining problems end up being simple. Just start doing a step that seems to go uphill. (there’s actually an AI technique for this called Stochastic Hill Climbing which relies on the same insight that sometimes it’s too much work to find the best path and you should just choose any path)
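For readers who haven’t seen it, here is a minimal sketch of what stochastic hill climbing can look like in Python. Instead of evaluating every option to find the steepest step, it picks any step that goes uphill and takes it. The toy landscape, the function names and the fixed random seed are assumptions made for the example. A real research problem obviously doesn’t come with a `score` function.

```python
import random

def stochastic_hill_climb(score, neighbors, start, rng, max_steps=1000):
    """Repeatedly take a randomly chosen uphill step.

    Stops when no neighbor is better than the current position
    (a local maximum) or after `max_steps` steps.
    """
    current = start
    for _ in range(max_steps):
        uphill = [n for n in neighbors(current) if score(n) > score(current)]
        if not uphill:
            return current  # nothing nearby is better: we are on a peak
        current = rng.choice(uphill)  # any uphill step will do
    return current

# Toy landscape (hypothetical): a single peak at (3, -2).
def score(point):
    x, y = point
    return -((x - 3) ** 2) - ((y + 2) ** 2)

def grid_neighbors(point):
    x, y = point
    return [(x + 1, y), (x - 1, y), (x, y + 1), (x, y - 1)]

if __name__ == "__main__":
    rng = random.Random(0)  # fixed seed so runs are repeatable
    print(stochastic_hill_climb(score, grid_neighbors, (0, 0), rng))
```

On this landscape every uphill path ends at the same peak, so it doesn’t matter which one you pick. On a bumpier landscape different random choices can end on different peaks, which is where the advice on getting unstuck comes back in.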

4.2 Be Lucky

Being lucky is a skill that you can learn. And it’s actually a fairly easy skill to learn. That may sound surprising to some people (especially to unlucky people) but it’s true. Here, Richard Wiseman writes about his research into luck. What he did is he found people who thought of themselves as especially lucky or especially unlucky and he asked them a lot of questions. Here are a few excerpts from the article that give you a good idea of what he found:

I gave both lucky and unlucky people a newspaper, and asked them to look through it and tell me how many photographs were inside. On average, the unlucky people took about two minutes to count the photographs, whereas the lucky people took just seconds. Why? Because the second page of the newspaper contained the message: “Stop counting. There are 43 photographs in this newspaper.” This message took up half of the page and was written in type that was more than 2in high. It was staring everyone straight in the face, but the unlucky people tended to miss it and the lucky people tended to spot it.

For fun, I placed a second large message halfway through the newspaper: “Stop counting. Tell the experimenter you have seen this and win £250.” Again, the unlucky people missed the opportunity because they were still too busy looking for photographs.

[…]

And so it is with luck – unlucky people miss chance opportunities because they are too focused on looking for something else. They go to parties intent on finding their perfect partner and so miss opportunities to make good friends. They look through newspapers determined to find certain types of job advertisements and as a result miss other types of jobs. Lucky people are more relaxed and open, and therefore see what is there rather than just what they are looking for.

My research revealed that lucky people generate good fortune via four basic principles. They are skilled at creating and noticing chance opportunities, make lucky decisions by listening to their intuition, create self-fulfilling prophesies via positive expectations, and adopt a resilient attitude that transforms bad luck into good.

[…]

In the wake of these studies, I think there are three easy techniques that can help to maximise good fortune:

- Unlucky people often fail to follow their intuition when making a choice, whereas lucky people tend to respect hunches. Lucky people are interested in how they both think and feel about the various options, rather than simply looking at the rational side of the situation. I think this helps them because gut feelings act as an alarm bell – a reason to consider a decision carefully.

- Unlucky people tend to be creatures of routine. They tend to take the same route to and from work and talk to the same types of people at parties. In contrast, many lucky people try to introduce variety into their lives. For example, one person described how he thought of a colour before arriving at a party and then introduced himself to people wearing that colour. This kind of behaviour boosts the likelihood of chance opportunities by introducing variety.

- Lucky people tend to see the positive side of their ill fortune. They imagine how things could have been worse. In one interview, a lucky volunteer arrived with his leg in a plaster cast and described how he had fallen down a flight of stairs. I asked him whether he still felt lucky and he cheerfully explained that he felt luckier than before. As he pointed out, he could have broken his neck.

I can’t overstate how important this stuff is. Half of the advice from this blog post is due to me being lucky. For example the way I found Kenneth Stanley’s great talk “Why Greatness Cannot Be Planned: The Myth of the Objective” was that I was following Bret Victor on Twitter (or maybe it was from the RSS feed of his quotes page) because he is a constant source of new perspectives. That led me to watch this talk by Carver Mead about a new theory of gravity. Which I watched even though I have no reason at all to look into this. I barely know any physics. But come on, a new theory of gravity. And it’s supposed to be simpler than Einstein’s theory while still making all the same predictions. That’s interesting. Then I went to find out more about the conference that that talk was given at and finally stumbled onto Kenneth Stanley’s talk.

None of these steps have any obvious practical benefit for me, but they led me to a great talk, which coincidentally has the best demonstration I have ever seen about why you should behave in exactly this way.

Being lucky can mean that you never actually find what you’re looking for. You may find something else entirely. The list of scientific discoveries that were made “accidentally” is long. But you need to learn to be lucky, otherwise you will miss those chances when you encounter them.

Here are Polya’s proverbs for this topic:

Arrows are made of all sorts of wood.

As the wind blows you must set your sail.

Cut your cloak according to the cloth.

We must do as we may if we can’t do as we would.

A wise man changes his mind, a fool never does.

Have two strings in your bow.

A wise man will make more opportunities than he finds.

A wise man will make tools of what comes to hand.

A wise man turns chance into good fortune.

4.3 Show Up

The title of this section is referring to the Woody Allen quote “80 percent of success is showing up”. This means showing up to work every day and working on a problem. Thomas Edison is supposed to have said that “ninety per cent of a man’s success in business is perspiration.”

“Showing up” can be more broadly applied: Show up to conferences. Show up to lunch with coworkers because that’s where you will have good discussions. Show up to dinner parties because that’s where you might meet people who can give you fresh ideas. Write the papers you’re supposed to write. Read the papers you’re supposed to read.

Part of this is to “be lucky” as in the point above. You can’t be lucky if you don’t show up. So you also want to get yourself into environments where you can show up to all these events. There is a reason why good research rarely comes out of some small town in the middle of nowhere: There are not enough opportunities to show up to out there. You want to at least live in a college town or a big city.

Here is Polya’s list of proverbs for this section:

Diligence is the mother of good luck.

Perseverance kills the game.

An oak is not felled at one stroke.

If at first you don’t succeed, try, try again.

Try all the keys in the bunch.

One final thing that I should point out is that I intentionally didn’t call this section “work hard.” I think that “show up” is better advice. This is not about working 80 hour weeks. It’s about showing up to work on a problem every day.

4.4 Improve Iteration Times

The term “iteration time” is a standard term in video game development which roughly measures how much time passes between being finished with a change and seeing the change in the game. So for me as a programmer I make a change, then I have to compile the code, launch the game, get to a point where I can test my change and then test my change. Let’s say compiling takes ten seconds, launching the game takes twenty seconds, and getting to my test setup takes another ten seconds, then my iteration time is 40 seconds. So if I decide to make another small change, I have to wait another 40 seconds before I can see the result. If I can cut the compile time in half then my iteration time is just 35 seconds, which is a good improvement. If I can create a test setup that doesn’t require the whole game to boot then maybe I can get my iteration time down to just 15 seconds.
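To make the arithmetic concrete, here is a minimal sketch. The step names and the `iteration_time` helper are made up for illustration; the durations are the ones from the example above.

```python
# Seconds spent in each step of one iteration (numbers from the example above).
# The step names are hypothetical labels, not part of any real tool.
baseline = {"compile": 10, "launch_game": 20, "reach_test_setup": 10}


def iteration_time(steps):
    """Total seconds between finishing a change and seeing its result."""
    return sum(steps.values())


# Halving the compile time only removes 5 of the 40 seconds.
faster_compile = dict(baseline, compile=baseline["compile"] / 2)

print(iteration_time(baseline))        # 40
print(iteration_time(faster_compile))  # 35.0
```

Writing the steps down like this also shows where the time actually goes: in this example launching the game is half of the total, so that is probably the step to attack first.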

At the beginning of this blog post I talked about how research is often characterized by slow progress. Exploring one path might take you a week before you find out that it’s a dead end. You shouldn’t just accept that. You should find ways to reduce that time.

Improving iteration time helps in many non-obvious ways: If you can improve iteration times, you can make it cheaper to make mistakes. If an experiment takes you two hours, you probably don’t want to make a mistake and you’ll be very careful. If you can do the experiment in a minute, then some mistakes are OK and you can play around more. But even if you just reduce it from two hours to one hour and 45 minutes, that will still improve your work a little bit. And maybe you can find more improvements after that.

Now you have to invest time to save time, so sometimes it’s not worth it. But sometimes you’ll be surprised. I’ve had an argument about improving iteration times below two seconds. The other person argued that if your iteration time is only two seconds, how much time are you going to save by reducing the iteration time to one second? (and how much effort do you need to invest to achieve a 50% reduction?) But what happens is that when you reduce iteration times, you work differently. If your iteration time is milliseconds, all of a sudden you can work entirely differently. You can try several alternatives per second and create an interactive animation showing the alternatives. You can try different parameters in real time and see what happens. You can show several different variations of the problem on the screen at the same time. At some point you can write a program that just explores a million options and gives out the best one. (but then ironically that program would have slow iteration times, so maybe an interactive tool would be better)
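As a rough sketch of the “explore a million options” idea: the `score` function below is a made-up stand-in for whatever you are actually measuring, and the parameter ranges are arbitrary assumptions. The point is only that once a single evaluation is cheap, trying every combination becomes a few lines of code.

```python
import itertools


def score(a, b):
    """Hypothetical stand-in for 'how good is this option'; higher is better."""
    return -((a - 3) ** 2) - (b + 1) ** 2


def best_option(a_values, b_values):
    """Try every combination of a and b and return the highest-scoring pair."""
    return max(itertools.product(a_values, b_values), key=lambda ab: score(*ab))


# 1000 x 1000 = one million options, each evaluated once.
a_values = [i / 100 for i in range(-500, 500)]
b_values = [i / 100 for i in range(-500, 500)]

print(best_option(a_values, b_values))  # (3.0, -1.0)
```

This only works because each call to `score` takes about a microsecond. If one evaluation took 40 seconds, the same sweep would take more than a year.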

Improving iteration times is a lot about automation, but often it’s also just about being observant as to where you are losing time. You can apply a lot of lessons from factories here. Standardize processes, specialize, batch your work etc. Also if you don’t know how to program, then you should probably learn how to. It’s easier now than it ever was. And to automate simple tasks like “entering numbers into an Excel sheet” you don’t need a full computer science education.
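To give an idea of how little code this kind of automation needs, here is a sketch that writes a few numbers into a CSV file, which Excel and other spreadsheet programs can open. The readings, the file name and the `save_measurements` helper are all made up for illustration.

```python
import csv
import os
import tempfile


def save_measurements(path, measurements):
    """Write (label, value) pairs to a CSV file that spreadsheet programs can open."""
    with open(path, "w", newline="") as f:
        writer = csv.writer(f)
        writer.writerow(["label", "value"])  # header row
        writer.writerows(measurements)       # one row per measurement


# Hypothetical readings; in practice these would come from an instrument or a log file.
readings = [("run 1", 0.52), ("run 2", 0.48), ("run 3", 0.55)]

# Written to the system's temporary directory so the sketch runs anywhere.
path = os.path.join(tempfile.gettempdir(), "measurements.csv")
save_measurements(path, readings)
print("wrote", path)
```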

4.5 Generalize

Here is something you should do whenever you’re finished with a step: See what the implications of that step are beyond the specific step. See if it has broader applications. Here is Claude Shannon talking about this:

Another mental gimmick for aid in research work, I think, is the idea of generalization. This is very powerful in mathematical research. The typical mathematical theory developed in the following way to prove a very isolated, special result, particular theorem – someone always will come along and start generalizing it. He will leave it where it was in two dimensions before he will do it in N dimensions; or if it was in some kind of algebra, he will work in a general algebraic field; if it was in the field of real numbers, he will change it to a general algebraic field or something of that sort. This is actually quite easy to do if you only remember to do it. If the minute you’ve found an answer to something, the next thing to do is to ask yourself if you can generalize this anymore – can I make the same, make a broader statement which includes more – there, I think, in terms of engineering, the same thing should be kept in mind. As you see, if somebody comes along with a clever way of doing something, one should ask oneself “Can I apply the same principle in more general ways? Can I use this same clever idea represented here to solve a larger class of problems? Is there any place else that I can use this particular thing?”

In this talk Clay Christensen points out that generalizing makes it easier to prove yourself wrong. When you generalize your concept, you have more examples to test it against, and you can use those examples to improve your theory. If you test it against a new example and your theory doesn’t work, you have to either define the limits of your theory, or you have to explain why it sometimes behaves differently. (and then sometimes these new explanations help explain oddities in your original data)

There is also a quote by Feynman which I can’t find right now where he essentially says “if you’re not generalizing, then what’s the point?” With the reason being that the only way that science progresses is to make guesses beyond the specifics of what we observed.

One word of caution about this is that you can also be over-eager about this. Don’t try to find a pattern if all you have is two examples. (or god forbid only one example) You usually want to generalize after you’ve seen three or four examples of something. Of course the trick is in recognizing that three apparently different things are actually examples of the same thing.

4.6 Momentum/Flow

This is another thing that you probably do automatically, but it’s worth pointing out: When you’re moving forward, you should try hard to keep moving forward. The usual example of this is when you were stuck for a while: Once you’re over the hurdle, you should keep working on the thing that got you over the hurdle, because you can probably make more progress there.

Csikszentmihalyi talks about the concept of “Flow” in relation to this, which is a highly focused mental state that you enter when you’re doing concentrated work. You want to stay in that state.

The easiest way to get this wrong is to get stuck on small bumps. There are plenty of small speed bumps along the way that will just slow you down. If there is something in a paper that you don’t understand, ignore it and keep reading. Maybe it will become clear later. If you already know something to be true, but proving it is tricky, skip over the proof. You can fill in the gaps later. If you’re working on an algorithm but an edge case is driving you nuts, don’t handle the edge case now. Just solve the cases that you actually need.

It’s important that you revisit each of these points later to fill in the gaps (because sometimes good discoveries hide in small irregularities) but you shouldn’t let a small speed bump stop you when you were making good progress before.

Here are Polya’s proverbs for this, the first one being ironic:

Do and undo, the day is long enough.

If you will sail without danger you must never put to sea.

Do the likeliest and hope the best.

Use the means and God will give the blessing.

4.7 Slow Down and Check Every Step

This is the opposite advice of the previous point, but what can I say. Sometimes you gotta keep on moving forward, sometimes you have to be careful. Often you have to do both.

You can waste a huge amount of time if you mess up a step and never notice.

Polya’s questions for this are “Carrying out the plan of the solution, check each step. Can you see clearly that the step is correct? Can you prove it?”

You get better at this with experience. As you gain more experience, you will just intuitively avoid problems. So if it looks like a very experienced person isn’t checking every step, it may just be that they have taken steps like this a thousand times before.

On the other hand for me personally I feel like I’ve become more and more careful the more I have programmed. My changelists these days tend to be smaller than they used to be. I rarely make huge changes nowadays. Instead I try to make many smaller steps, each of which I can reason about.

The other thing I’d like to mention in this context is that sometimes slowing down can help. Sometimes if you have to make a decision, it’s best to wait for a while before making it. Try to work around it and get a better lay of the land. This is why procrastination sometimes works. Sometimes with delay the correct choice becomes clear. Sometimes all you’re doing is delaying though…

Polya’s proverbs for this section are these:

Look before you leap.

Try before you trust.

A wise delay makes the road safe.

Step after step the ladder is ascended.

Little by little as the cat ate the flickle.

Do it by degrees.

4.8 Do Experiments

I added this point fairly late to this list because it’s so obvious. Of course you’re doing experiments. But then I found myself forgetting to do this often. And I would spend days going down a wrong path based on an assumption that I could have falsified quickly if I had just done a simple experiment.

Experiments are well known in classical scientific fields, but in programming they have different names. Unit tests can be experiments. Benchmarks are experiments. Visualizations can be experiments.

Experiments don’t necessarily have to be rigorous. Their value mostly comes from trying to isolate a problem. It helps with iteration times, it helps with momentum, but it also helps with slowing down and checking every step. It also helps you to clarify your thinking, because in order to create an experiment that isolates a problem, you have to think about the problem. If I ask myself “can I come up with an experiment for this?” the answer is “yes” surprisingly often, and these experiments can save a lot of time by keeping you from going down a wrong path.
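Here is a sketch of what such a quick, non-rigorous experiment can look like in programming. The assumption being tested is made up for illustration: “membership checks on my list are fast enough, so switching to a set wouldn’t matter.” Instead of reasoning about it for an afternoon, a few lines with Python’s `timeit` module give a first answer.

```python
import timeit

# Hypothetical data: 10,000 items stored once as a list and once as a set.
items_list = list(range(10_000))
items_set = set(items_list)
needle = 9_999  # worst case for the list: the item is at the very end


def time_lookup(container, repeats=1_000):
    """Seconds taken for `repeats` membership checks against `container`."""
    return timeit.timeit(lambda: needle in container, number=repeats)


list_seconds = time_lookup(items_list)
set_seconds = time_lookup(items_set)
print(f"list: {list_seconds:.4f}s  set: {set_seconds:.4f}s")
```

The exact numbers depend on the machine, and this is not a careful benchmark. But if the two timings differ by orders of magnitude, the assumption is falsified, and that took a minute instead of days.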

4.9 Work With Others

This is a point that I can’t possibly do justice to. Whole books have been written about how to form effective teams, so my advice in a blog post like this has to be hopelessly incomplete.

Research has the curious character where it’s often better when done by yourself. Kenneth Stanley has an amazing illustration of the damage that committees can do to research in his talk. (same talk that I keep referring to) If you have to constantly justify what you’re doing, you won’t do the exploration that’s necessary to actually get anywhere. Yet at the same time none of the pictures that he shows in his talk are the result of people working alone. So how do we square that circle?

Research about effective teams has shown that one of the most important things is emotional safety. (the research literature usually calls this “psychological safety”) You should be safe to speak up, safe to ask stupid questions, safe to follow hunches, safe to take a risk, and safe to admit mistakes. If you make decisions by committee, none of these things are true because you have to constantly justify what you are doing, and you have to constantly compete with others to make sure that your priority is still everyone’s priority.

One piece of advice that I like for this is the practice of “Yes, And” from improv comedy. If somebody has an idea, you can’t say “no that’s stupid.” (or use a more subtle way to shut it down) You have to say “yes”, and you have to add something to it to keep the idea alive. I got the idea from this talk by Uri Alon, who gives the following example:

We were stuck for a year trying to understand the intricate biochemical networks inside our cells, and we said, “We are deeply in the cloud,” and we had a playful conversation where my student Shai Shen Orr said, “Let’s just draw this on a piece of paper, this network,” and instead of saying, “But we’ve done that so many times and it doesn’t work,” I said, “Yes, and let’s use a very big piece of paper,” and then Ron Milo said, “Let’s use a gigantic architect’s blueprint kind of paper, and I know where to print it,” and we printed out the network and looked at it, and that’s where we made our most important discovery, that this complicated network is just made of a handful of simple, repeating interaction patterns like motifs in a stained glass window.

(the term “being in the cloud” is what I would call being stuck in a local maximum using the hill climbing analogy)